AI ANIMATION PRODUCTION

AI Animation Tools

AI Script Writing

ChatGPT / Grammarly

Not feeling up to writing your own work? Get a jump start on script writing by using ChatGPT to quickly generate a script from a short text prompt (or to revise your own work). It can also provide a shot list and examples of scene direction.

Grammarly is also a great tool for refining and improving grammar, tone and more.

AI Image Creation

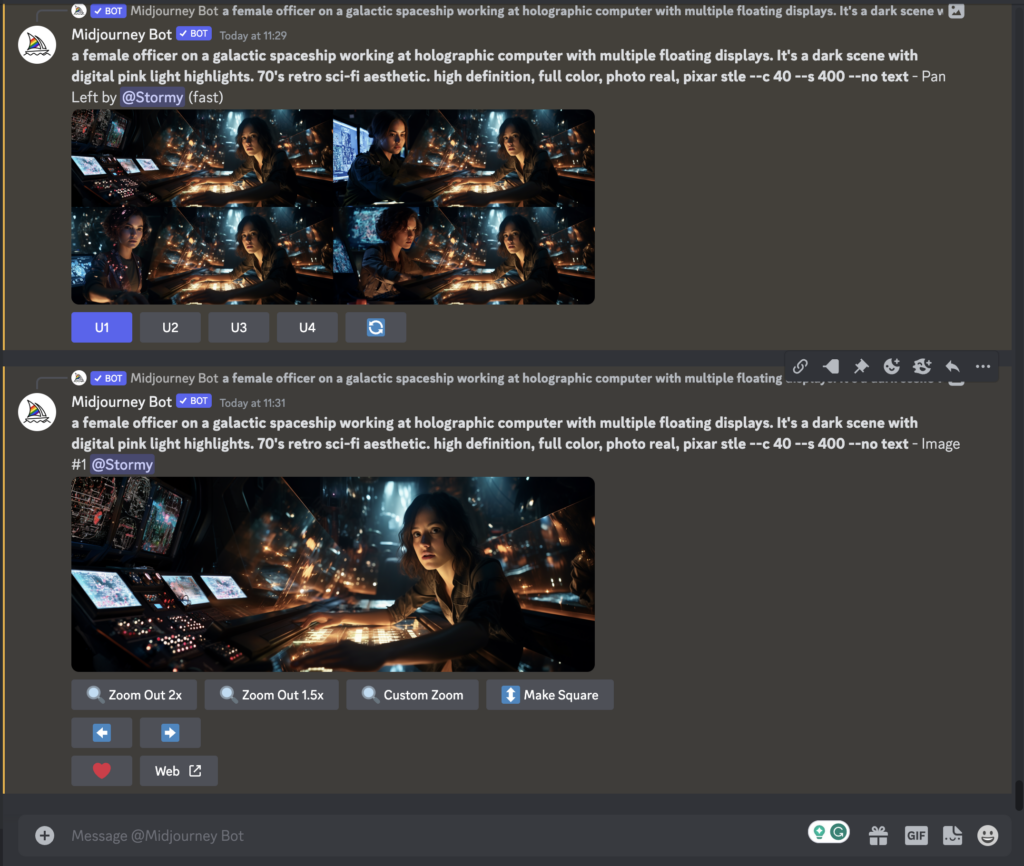

Midjourney

Currently the leader in producing quality images, Midjourney is an AI image generation solution that runs on Discord.

Using a text prompt, or image references combined with a text prompt, you can create stylistic and hyper-realistic imagery.

It generates four images per prompt. You can create new variations of any of them, upscale your favourite, and then pan or zoom the upscaled image to adapt it further.

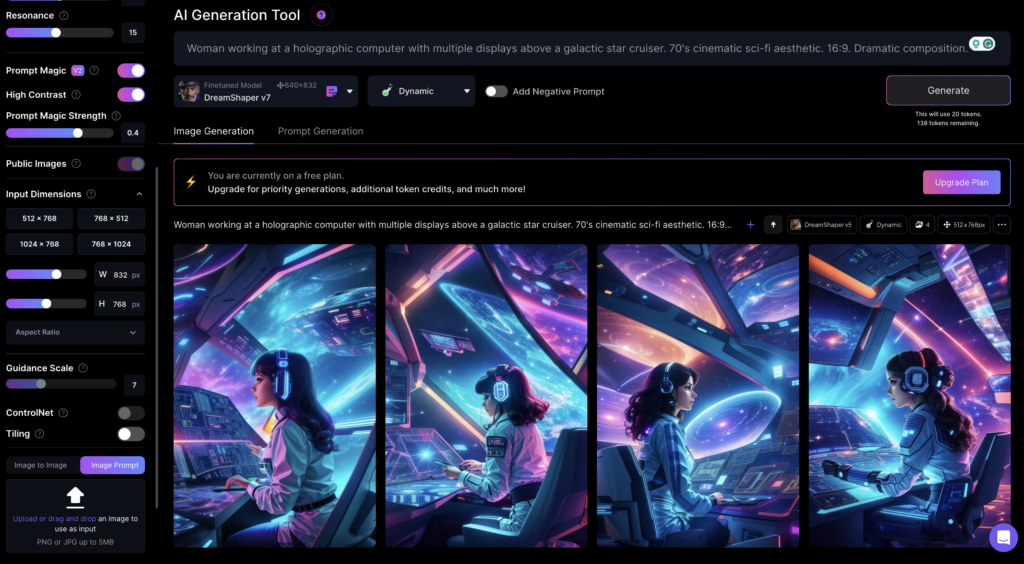

Leonardo AI

Another highly recommended AI generative image tool is Leonardo AI.

It produces images that rival the quality of Midjourney, plus it has a fantastic user interface for creating images that match your criteria.

You can check it out for free today. We've not used it much ourselves yet, but intend to try it out more on upcoming AI art generation projects.

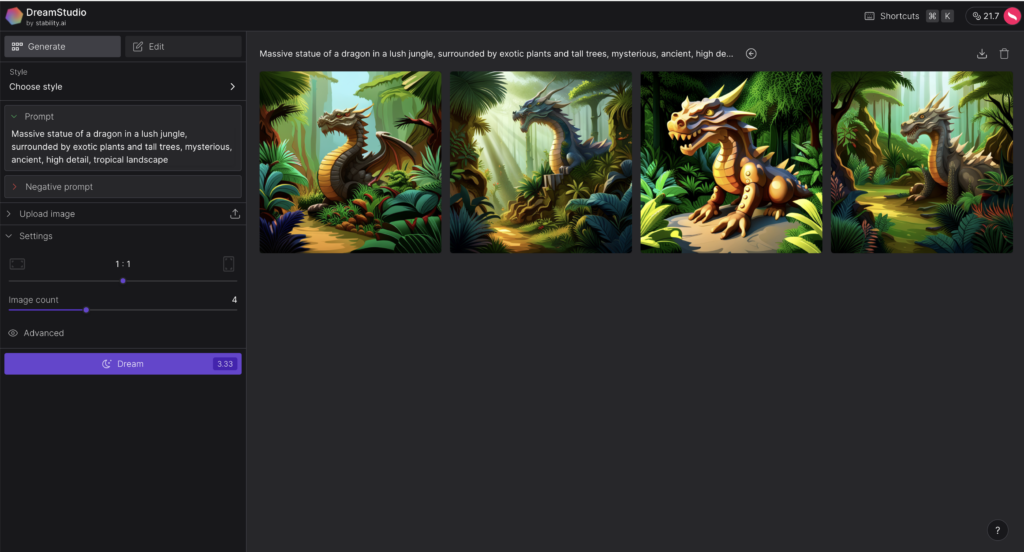

Stable Diffusion

Stable Diffusion is the most flexible AI image generator currently available and it is also entirely open source.

As a result, it's also being used as the basis for other third-party image generators. Recent updates to the model are producing images that are getting closer to the quality of output from Midjourney. You can try out the new SDXL model, which is creating stunning imagery, via Stability AI's DreamStudio site.

You can train your own models on your own dataset to get it to generate exactly the kind of images you're after. Plus, unlike the other options here, you can run it on your very own PC and generate as many images as you want, helping to bring generation costs down (assuming you have a suitably specced PC to hand).

3D Models with AI

Meshy AI

Meshy is one of our favourite 3D AI tools currently available, and it is shown in multiple tutorials on our YouTube channel.

Meshy allows you to generate high quality 3D meshes via a text prompt or image reference.

It also applies high quality textures, plus has leading AI tools to fix textures or fully retexture a model.

Plus recently they've added animation tools, so you can rig biped or quadruped characters and apply walking/running animations.

Finally it supports the download of popular 3D formats to take the models into your preferred 3D package for use in full animation workflows, image creation or computer game design.

Try it out for free today.

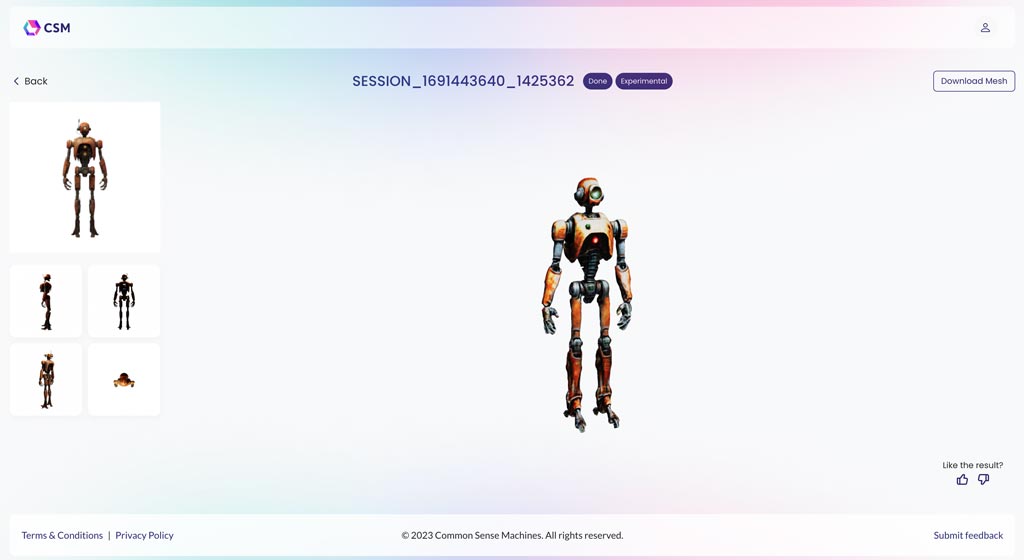

CSM

A relatively new arrival to the AI world (at least as far as we're aware), CSM, which stands for Common Sense Machines, allows you to turn a 2D image of an object or character into a 3D model with a single click of a button.

You can upload an image, review a few still images which give a (semi-accurate) indication of how the model will turn out, and press submit. It then currently takes a few hours to process before you can download an often impressive 3D file to use in your work.

The quality is already very impressive and improving rapidly.

It's also currently free to use with paid options no doubt on the horizon.

I used CSM in this full AI 3D animated scene tutorial, which you can view on YouTube here.

Tripo 3D

Tripo 3D is another new 3D AI model generation platform offering high quality mesh creation from a text or image prompt.

The meshes look to be of good quality, and the platform offers some animation options to apply to your generated character models.

Kaedim

Another AI tool that allows for the generation of 3D models from one (or multiple) 2D images is Kaedim.

I've not yet had a chance to try this out, but the site and tutorials show a very promising platform for the creation of good quality models with the possibility of further customisation after the initial generation.

There are currently paid trials and the option for a recurring monthly fee which varies depending on the amount of work you expect to generate.

AI 3D Animation

Mootion

Mootion is one of the leaders in the text to 3D character animation space, with updates rolling out quickly. They also offer Stable Diffusion style animation, with their 3D animation providing the reference to create truly unique content.

It creates two generations in around a minute. You can instantly preview the animation and then either generate more versions or download a 3D FBX file to take into your 3D package of choice. Alternatively, you can download a video file with transparency, so you can quickly composite it into a project using something like Adobe After Effects.

The FBX files come with a fully keyframed character armature. With a bit of know-how, you can transfer the animation to another model, tweak the animation manually and expand from there.

It's a really impressive platform already and will evolve and improve in the coming months as well.

Wonder Studio

It's not a phrase we use lightly, but this is simply a game changer.

Wonder Studio by Wonder Dynamics allows you to take filmed footage of an actor and replace them with a CGI character (of your own or from their growing library).

Their software uses AI to track the movement of the actor, paint out the actor to give you a clean plate, add in your chosen 3D CGI character, light the character to match the scene and render it all out. This is all done with a few clicks and delivers (so far, from our tests) exceptionally high quality output.

You also have the option to download an FBX file to use in your chosen 3D software to further improve the scene or use the animated character in a new scene of your own.

Higher paid options let users export Blender files, so you can save facial animation (*we've not yet tested this feature), as well as a clean plate and alpha masks.

Plus they'll soon be adding the ability to export a camera track and separate character pass.

It also has a simple but smart and effective online video editor to trim your clips before assigning characters to each clip.

All in, it's a very impressive suite of AI tools, implemented in a modern and easy-to-use way and designed to work well as part of a larger CGI animation production workflow.

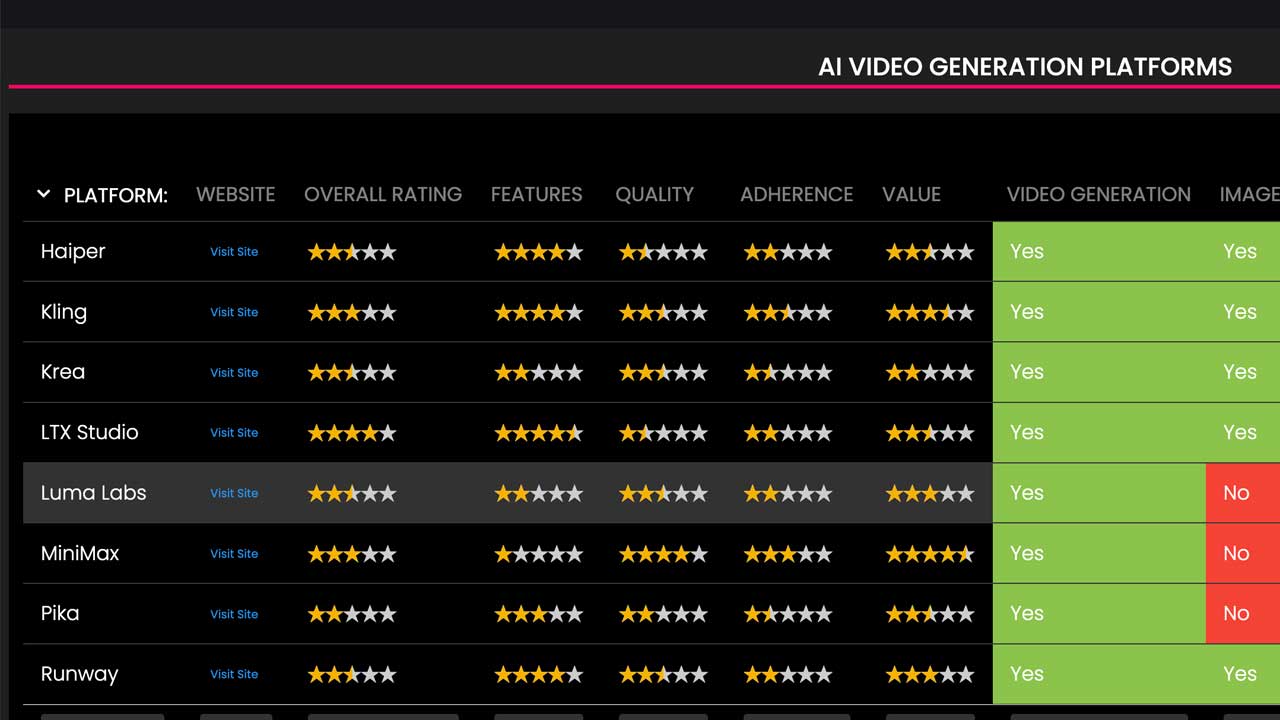

AI Video Generator Reference Table

I've collated a reference table of all the leading AI video generation platforms, breaking down their functionality, quality, adherence, pricing and more.

Check it out for a quick guide on which AI video generator might be best for your needs.

AI Animation / Video Gen

Krea

Create AI video/animation from a text prompt & more

Krea is one of the new stars in the AI creative space. Not only does it offer some of the best AI video generation (competing with Runway and Kling), it also offers real-time image generation and unique video upscaling and enhancing.

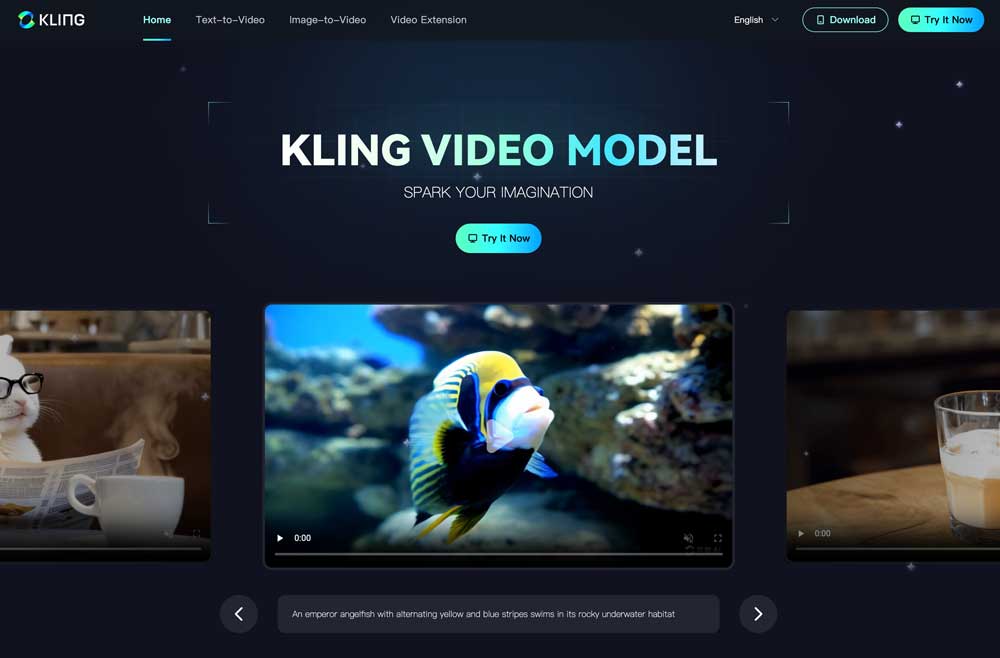

Kling

Create AI video/animation from a text prompt & more

One of the newer AI video generation platforms, Kling was first released in China and is now available globally.

It produces some of the best AI video generations and is expected to rival the quality of OpenAI's Sora (*when released), plus it is a worthy competitor to Runway ML.

Runway ML

Create video/animation from a text prompt & more

The first platform to come out with really high quality, and potentially useable, AI animation or AI video generation was Runway ML.

It offers a suite of AI-powered tools to assist with video production, e.g. scene extension, tracking, rotoscoping, static image generation and video editing.

They also released powerful video generation tools named Gen-1, Gen-2 and now Gen-3.

Gen-1 allows you to use AI text and image prompts to adapt filmed (or animated) footage to create compelling results, e.g. turning filmed footage of a man walking through his flat into a female adventurer in a dense jungle at night.

Gen-2 allows you to create stylised or realistic cinematic footage through the use of an image, text or text+image prompt, creating 100% AI-generated visuals. It includes camera controls and the unique motion brush to guide motion.

Gen-3 is their latest and best model, producing incredible results. It's expected that camera controls and motion brush will be added to Gen-3 soon.

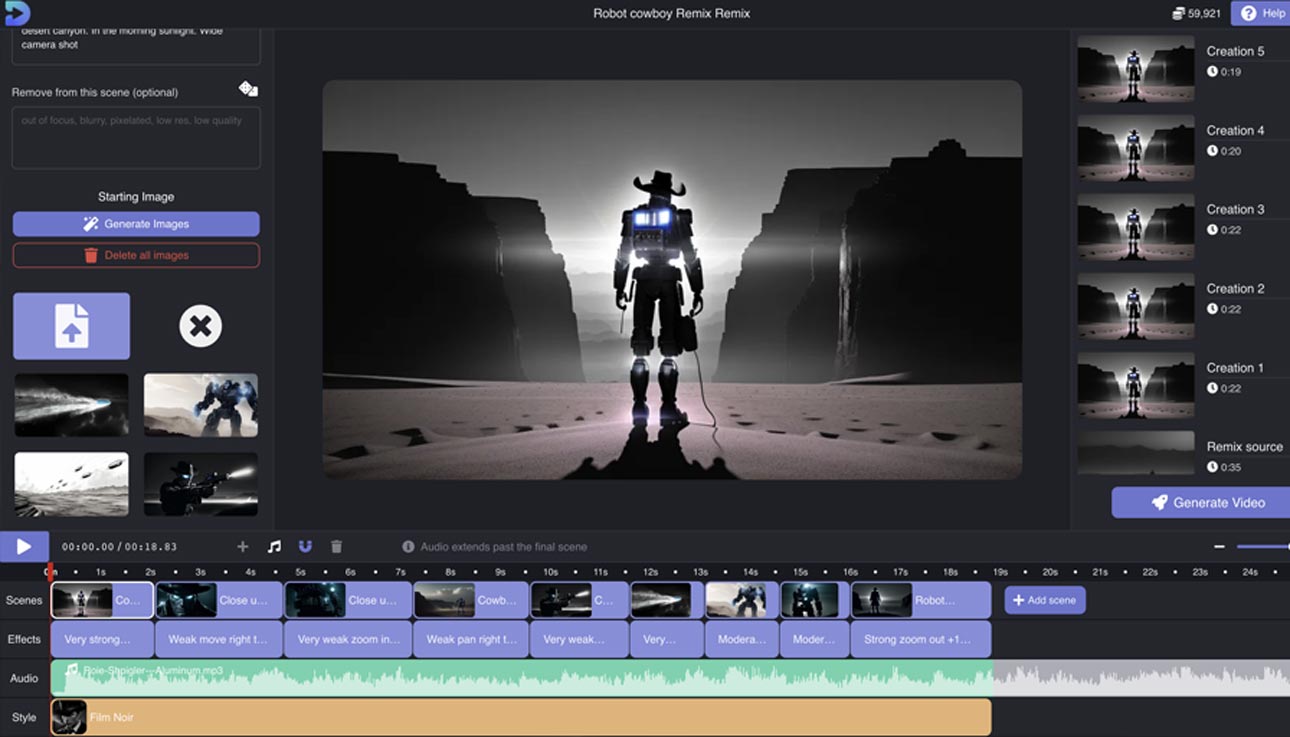

Decoherence

AI Animated video generator

Decoherence is a user-friendly AI video/animation generator that harnesses Stable Diffusion technology for creating high-quality animated content. It simplifies complex processes for creatives by offering an intuitive online interface.

You can start projects by using text prompts to generate images, combined with scene styles and motion prompts, to create animated clips. While you can use your own images (e.g. from Midjourney), the best results come from platform-generated ones.

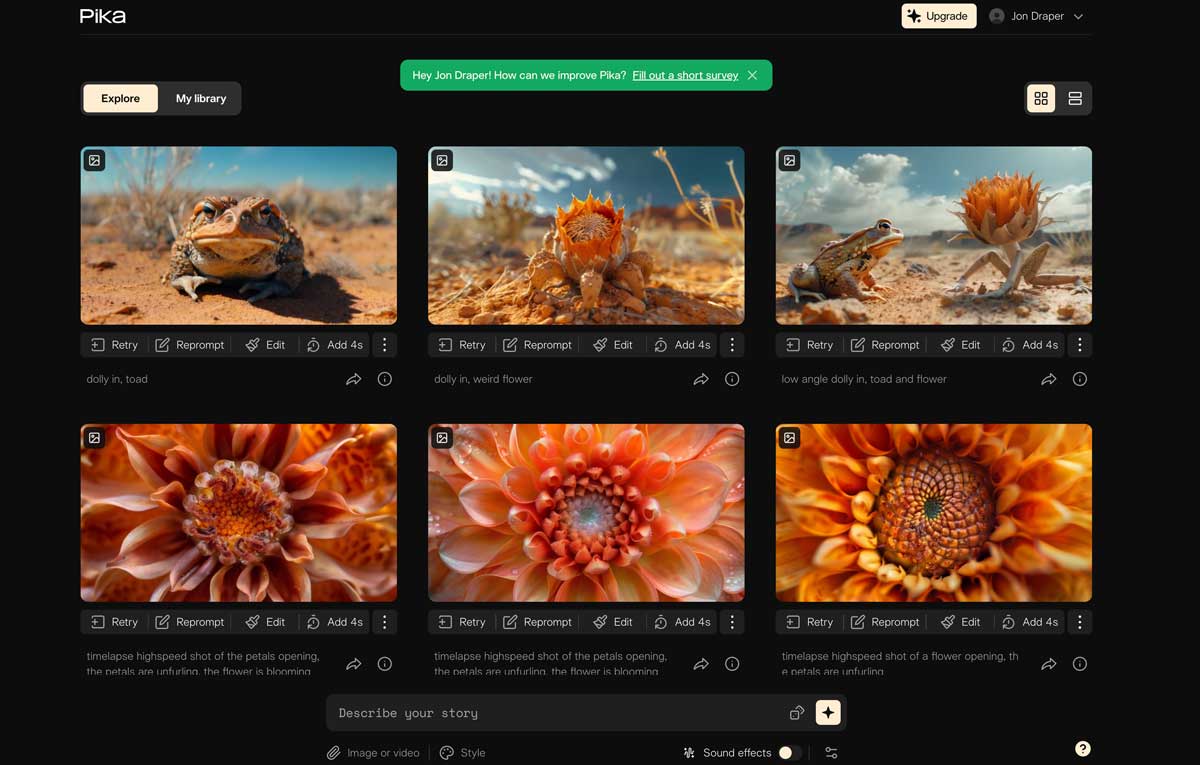

Pika

One of the early successes in the AI video space, Pika has a great UI for video creation, allowing you to quickly iterate and try out different prompts, image references and aspect ratios.

Whilst the current quality has been surpassed by competitors, we suspect Pika will roll out an update of their own soon to put them back in the pack with the leaders in the AI video generation space.

Zeroscope

Zeroscope is the only open-source text-to-video AI animation tool we've seen so far. You can use it (at the moment) by setting it up as a model on the website Hugging Face.

It creates 16:9 clips based on text prompts. The output is not yet as good as that produced by Runway ML, but the potential is clear, plus you can use Zeroscope XL to upscale generated clips.

Whilst you can supposedly use Zeroscope for free, to get quicker output we found it's better to pay for a high-spec GPU on Hugging Face to run the model on your own account and produce 3-second clips in just under a minute.

It's still cheap compared to competitors and, although the quality of output isn't great at the moment, it is evolving very quickly.
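As a rough way to reason about the Zeroscope running costs described above, the arithmetic can be sketched in Python. This is only an illustration: the function names and the hourly GPU rate are hypothetical placeholders (not real Hugging Face pricing), and the 60-second generation time is a rounded stand-in for "just under a minute" per 3-second clip.

```python
def clips_per_hour(seconds_per_clip: float) -> float:
    """How many clips one GPU can generate in an hour of continuous use."""
    return 3600.0 / seconds_per_clip


def cost_per_clip(gpu_hourly_rate: float, seconds_per_clip: float) -> float:
    """GPU rental cost attributable to a single generated clip."""
    return gpu_hourly_rate * seconds_per_clip / 3600.0


def footage_seconds_per_hour(clip_length_s: float, seconds_per_clip: float) -> float:
    """Seconds of finished footage produced per hour of GPU time."""
    return clip_length_s * clips_per_hour(seconds_per_clip)


if __name__ == "__main__":
    # Assumptions for illustration only: swap in the rate you are actually quoted.
    HYPOTHETICAL_GPU_RATE = 1.20   # currency units per GPU hour (placeholder)
    GENERATION_TIME_S = 60.0       # rounded up from "just under a minute"
    CLIP_LENGTH_S = 3.0            # clip length mentioned in the article

    print("Clips per hour:", clips_per_hour(GENERATION_TIME_S))
    print("Cost per clip:", cost_per_clip(HYPOTHETICAL_GPU_RATE, GENERATION_TIME_S))
    print("Footage per hour (s):", footage_seconds_per_hour(CLIP_LENGTH_S, GENERATION_TIME_S))
```

Plugging in your own hourly rate and measured generation time gives a quick per-clip figure to compare against the per-generation pricing of hosted tools.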

Kaiber

Creating a different style of motion, Kaiber lets you use an image and/or text prompt to create an animation which often has a dream-like quality.

It was one of the first AI video/animation generation tools we started using ourselves before looking at some of the above tools.

Stylised videos created using Kaiber have been seen across social media as people generated these recognisably flickery/stylised animations. It has been used successfully to create stylised music videos and otherworldly clips.

In time, it will be interesting to see if more control and consistency can be created using Kaiber.

Other AI Animation Tools

D-ID - Animated Faces

One web-based AI animation tool that is coming to the fore is D-ID and their AI-powered character animation software.

You can quickly hop in and have it animate one of their provided avatars, so its face comes to life and speaks the provided text.

A more exciting feature is the ability to submit your own character artwork (e.g. something generated with Midjourney) and have it brought to life.

The results (with their voiceover generation) are a little uncanny valley. You could then potentially composite the result with some movement in a scene produced through other AI tools, or more likely using Adobe After Effects and more traditional digital animation techniques, e.g. masking, the puppet tool, faking some parallax and keyframing motion.

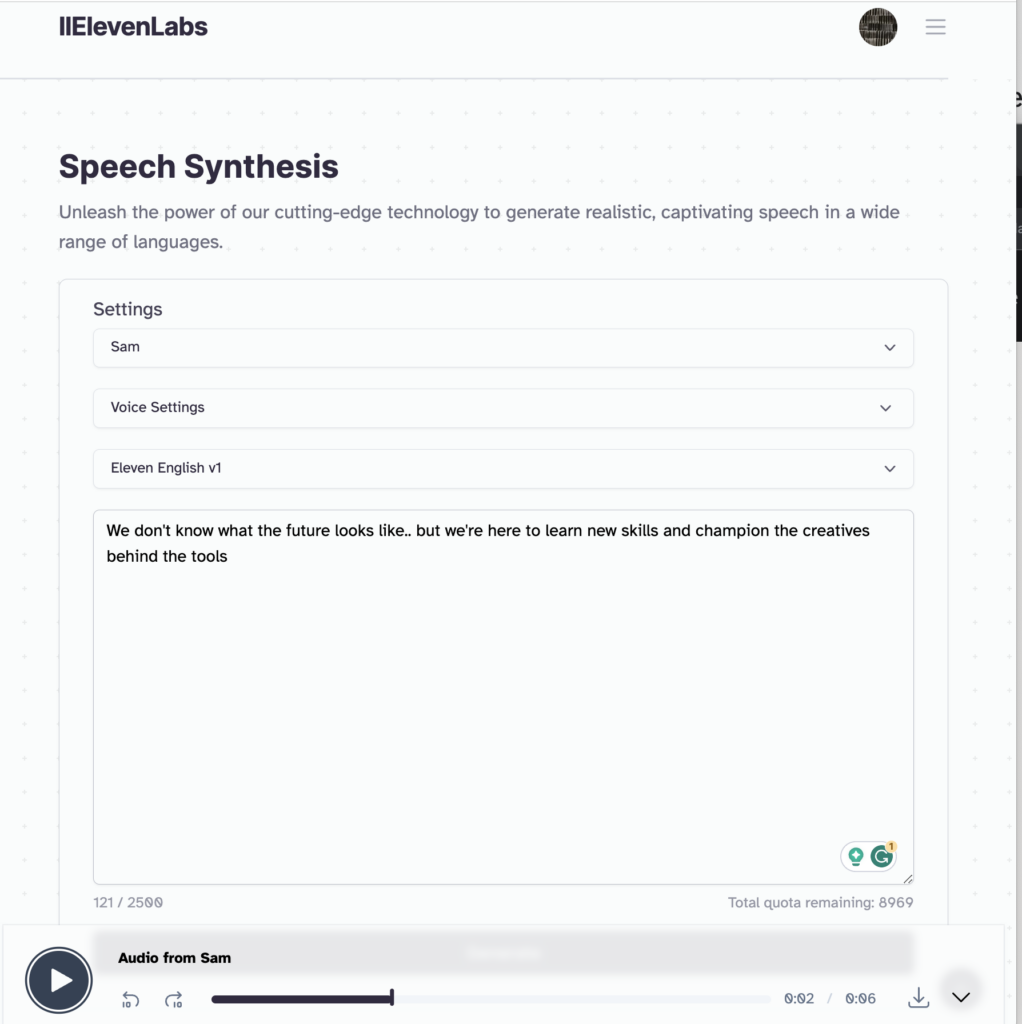

ElevenLabs - AI Voiceover Generation

Whilst there are quite a few AI voiceover generation tools now available, the one which we're seeing widely acclaimed, and which we used in our own site promo recently, is from ElevenLabs.

You can quickly and easily provide your voiceover script, select from an array of voices and have it generate the voiceover for download in seconds.

The output really is very impressive and, once registered, you can tweak the settings a little to get a different style of output. Whilst the voices do still slightly lack a real human quality, the results are more than good enough for a lot of projects. That makes it ideal if you're looking to generate placeholder (or permanent) voiceovers for an animation project where you need one or multiple voices.

With a bit of effort, you can also train a model to use your own or someone else's voice to automatically bring your scripts to life.

You can try out their service for free, and the monthly costs (if you're producing regular content) are very affordable.

Can you recommend any other leading AI tools to assist in animation?

Find AI Animation Work

AI Animation Creative Profiles

Register as an AI creative for free at AIAnimation.com and build a profile with video portfolio, bio, budget ranges, core animation skills, site links and more.

A site to showcase creatives using the latest AI tools and techniques to create professional animation.